How did agile software engineering practices evolve? What were the initial software engineering practices, what were they based on, and what were the challenges that brought about the development of agile practices?

This article provides a brief introduction to how agile came to be developed. Agile methodologies are tools, much like writing. One doesn’t need to know that the Sumerians invented writing in the 4th millennium BCE to send an email; however, understanding how writing evolved to solve communication problems makes one a better writer. Similarly, insight into the development of agile frames the types of problems it was designed to solve and provides a better sense of how to use the tool.

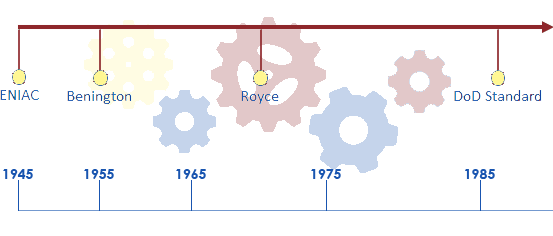

In the Beginning There Was ENIAC

The first true general-purpose electronic computer was the Electronic Numerical Integrator and Computer, better known as ENIAC. The project was sponsored by the US Army and built at the University of Pennsylvania in Philadelphia. ENIAC went into production shortly after the end of WW2, on approximately 12-Dec-1945. It was built using “electronics by the pound”, that is, vacuum tubes, accumulators, physical switches, and other “hard” hardware.

ENIAC was a fully programmable, Turing-complete machine. Although it could perform many of the computing operations we would recognize today, such as branching, loops, series of steps, and sub-routines, programming was a slow and difficult process that produced little functionality for a significant amount of work. Programs were input on plugboards and a set of three function panels that had 1,200 ten-way switches each. Computing problems had to be worked out on paper and then mapped to the plugboard and switch settings. Once done, the settings had to be physically input into the machine, a difficult and mistake-prone operation. Given the challenges of programming and the costs of operating the machine, a significant amount of testing was performed prior to execution.

Processing power was limited, and as such the problems that could be automated on ENIAC were fairly straightforward and easily definable.

Given these limitations, software engineering in the early days had the following characteristics:

- It followed models from other physical and industrial engineering disciplines.

- The problems to be solved were simple and could be completely and clearly documented in advance.

- Solutions were designed and validated on paper before any physical programming was executed.

- Programming was performed according to a highly detailed plan.

- Significant formal testing of individual components, as well as of the full process, was conducted prior to execution of the runs.

This process should be completely familiar to any of us who have worked on projects that used classic software engineering practices, that is, requirements, design, build, test, and production in a rigid sequence. These methodologies worked well as long as the goals and solutions were simple, well understood, and could be completely defined in advance.

As the power of computers evolved, software became more complex. Given the expense of machine time and the effort needed to generate programs, software engineering continued to follow these engineering and manufacturing models. Building software the same way a bridge is built allowed for little change or flexibility late in the effort. Since requirements were generally simple and clearly understood in advance, this was not necessarily a problem.

Early Software Engineering Developments

The first real codification of the early standard software engineering method came in 1956, when Herbert Benington presented a formal process to the Symposium on Advanced Programming Methods for Digital Computers, sponsored by the United States Navy. The process, which called for a structured nine-phase approach, was not that different from the original engineering procedure used on ENIAC. It outlined the rigid steps that needed to be completed in sequence to develop a computer program.

Although the methodology had already been widely adopted, the model that came to be called Waterfall is usually traced to a paper presented by Winston Royce to IEEE in 1970, although the paper itself never used the term. Notably, Royce stated that the process was risky and invited failure. Despite Royce’s warning, waterfall increasingly became the standard approach. By 1985, the Department of Defense indicated that it was the standard for production of systems for the military. As with much in computing and project management, the Department of Defense drove wide adoption of processes and techniques.

The waterfall methodology had some advantages:

- Followed tried-and-true process models from engineering and manufacturing, and allowed for division and specialization of labor.

- Produced comprehensive documented plans on how to execute the solution.

- Allowed for contracts that clearly defined what was to be produced.

- Produced good results as long as assumptions and requirements were completely understood and changed little.

The limited processing power of the machines kept the complexity of what could be done relatively low. As such, the classic process provided sufficient success to be the model for the early development of information technologies.

Nothing Ever Stays the Same

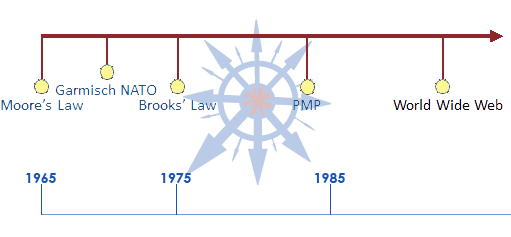

Change is the only constant and Moore’s law is relentless. The increasing power of computers expanded the range of problems that could be solved using computers. Growing complexity heightened the risk that assumptions and requirements were neither complete nor correct. The predictability and immutability of assumptions and requirements were critical to the classically inspired engineering approach. This prerequisite was beginning to fray.

Concerns were becoming evident. In 1968, a NATO Software Engineering Conference was held in Garmisch, Germany. The conference was attended by international experts in computer software, and among its goals was to formally define software engineering best practices. It produced a significant report on the state of software engineering at the time. Attendees widely observed that a software crisis existed, especially with larger systems, as indicated by frequent blown budgets, delayed releases, and outright failures of software projects. The report also noted the growing importance of educating trained software developers to meet increasing demand.

The application of classic engineering practices was beginning to produce counterintuitive results. In 1975, Fred Brooks released his seminal book, “The Mythical Man-Month”, based on his experience managing the IBM OS/360 project. He observed from first-hand knowledge: “Adding manpower to a late software project makes it later.” This was an early indication that classic engineering practices were beginning to fail and might not actually apply to software.

Although there were attempts to solve the problem through tools and more sophisticated management paradigms such as PMI’s PMBOK, the problems not only defied solution but continued to worsen.

Then along came the World Wide Web and the dot-com boom. As a consequence, there was an explosion of software being demanded and built. The competitive advantage of time to market grew. Business models were emerging, evolving, and changing rapidly. The increasing power of development tools such as object-oriented languages magnified software complexity. A perfect storm had struck, and the software industry was approaching chaos. The idea that one can build software like building a bridge was falling apart.

Complexity to Chaos

People were trying to adapt. Brooks’ observations from “The Mythical Man-Month” were being used to change the way software was engineered. Thought leaders were beginning to realize that software was a different kind of artifact from those produced by classic engineering.

Early iterative approaches were developed in the late 1980s and early 1990s, primarily by Barry Boehm and James Martin. The theory was that knowledge gained from the development process could be used to refine requirements and the solution’s design. The Rapid Application Development (Martin) and Spiral Model (Boehm) processes attempted to produce semi-functioning software for quick presentation to users and customers, facilitating early validation of requirements. This approach was found to be superior to developing complete, abstract requirements documentation prior to build. The new methods were popular but problematic, as they tended to deliver a series of prototypes instead of truly working software, especially for larger systems. The lack of a comprehensive architectural vision limited the approach to small and mid-size solutions. Although these methods were an improvement, there still appeared to be a complexity threshold that could not be crossed.

Another promising contender, formally introduced in 1994, was the Rational Unified Process (RUP), developed by Rational Software and later adopted by IBM. RUP employed an iterative development process to push requirements gathering later in the development process, theoretically reducing risk. It resolved some problems but was still a sequential approach that suffered from some of the same issues as waterfall. However, between RAD, Spiral, and RUP, it was becoming clearer that iterative methods that put software in front of users as early as possible were an encouraging direction.

Next Time: From Crisis of Chaos to the Agile Manifesto

We will continue our historical exploration of the development of agile software engineering in Part 2 of this brief history, which will explore the emergence of lightweight methodologies and the evolution to the Agile Manifesto. The article will be published shortly, so sign up for the PointProx mailing list to get a notification as soon as it is published.

References

- Herbert Benington: Large Programs for Control and Processing

- Barry Boehm: The Incremental Commitment Spiral Model: Principles and Practices for Successful Systems and Software

- Barry Boehm: Spiral Model Overview

- NATO Science Committee: Software Engineering, Garmisch, Germany, 1968

- Fred Brooks: The Mythical Man-Month

- Project Management Institute: A Guide to the Project Management Body of Knowledge, Sixth Edition
